If you look at the leftmost curve, its 95th percentile is below 4, but the red curve hasn't even reached its mode by then.

Using the wrong d.f. would lead to not having the correct type I error rate: your claimed significance level, alpha, could be much higher or lower than you thought it was. For instance, with a chi-squared test, using the first row of a typical chi-squared table instead of the last row (120 d.f. on the table I have handy) would lead to a type I error rate of not 0.05 but so close to 1 as to make no practical difference (1.85 × 10⁻⁶⁶ shy of 1). Which means that when H0 was true, instead of incorrectly rejecting 5% of the time, you incorrectly reject effectively 100% of the time.

Conversely, if you used the last row when you should have used the first row, the type I error rate would be essentially 0, which would mean you'd have almost no power to detect even huge differences.
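The chi-squared error rates quoted above can be checked roughly with nothing but the standard library. This is only a sketch: the two critical values are assumed to be the usual 95th-percentile table entries for 1 and 120 d.f., and the helper names (`chi2_sf_df1`, `chi2_cdf_even_df`) are made up for illustration. It relies on two standard identities: a chi-squared variable with 1 d.f. is a squared standard normal, and for even d.f. the chi-squared CDF equals a Poisson upper tail.

```python
import math

# 95th percentiles from a standard chi-squared table (assumed inputs).
CRIT_DF1 = 3.8415     # first row: 1 d.f.
CRIT_DF120 = 146.567  # last row on many tables: 120 d.f.

def chi2_sf_df1(x):
    """P(X > x) for chi-squared with 1 d.f., using X = Z**2 for standard normal Z."""
    return math.erfc(math.sqrt(x / 2.0))

def chi2_cdf_even_df(x, df):
    """P(X <= x) for chi-squared with even d.f., summed as a Poisson upper tail."""
    half, k = x / 2.0, df // 2
    # First term of the series: exp(-half) * half**k / k!
    term = math.exp(-half + k * math.log(half) - math.lgamma(k + 1))
    total = 0.0
    j = k
    while term > total * 1e-17:  # stop once further terms no longer matter
        total += term
        j += 1
        term *= half / j
    return total

# True d.f. is 120, but the 1 d.f. critical value is used:
# the type I error rate is 1 minus this lower-tail probability.
shortfall = chi2_cdf_even_df(CRIT_DF1, 120)

# True d.f. is 1, but the 120 d.f. critical value is used.
tiny_alpha = chi2_sf_df1(CRIT_DF120)

print(f"first row used for 120 d.f.: type I error rate is {shortfall:.3g} shy of 1")
print(f"last row used for 1 d.f.: type I error rate is {tiny_alpha:.3g}")
```

The lower tail is summed directly rather than computed as one minus the upper tail, because a number that close to 1 cannot be told apart from 1 in ordinary floating point.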

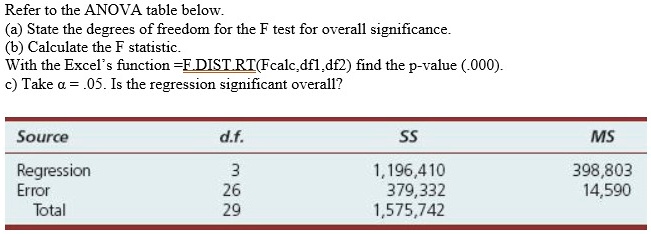

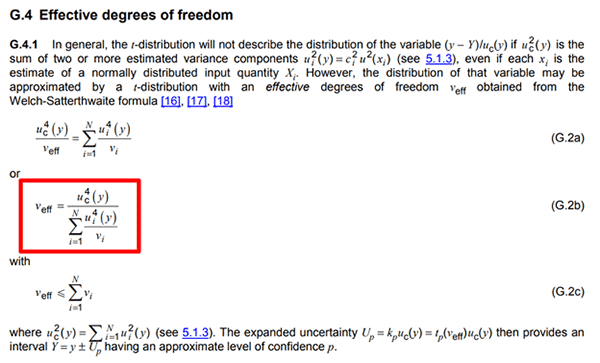

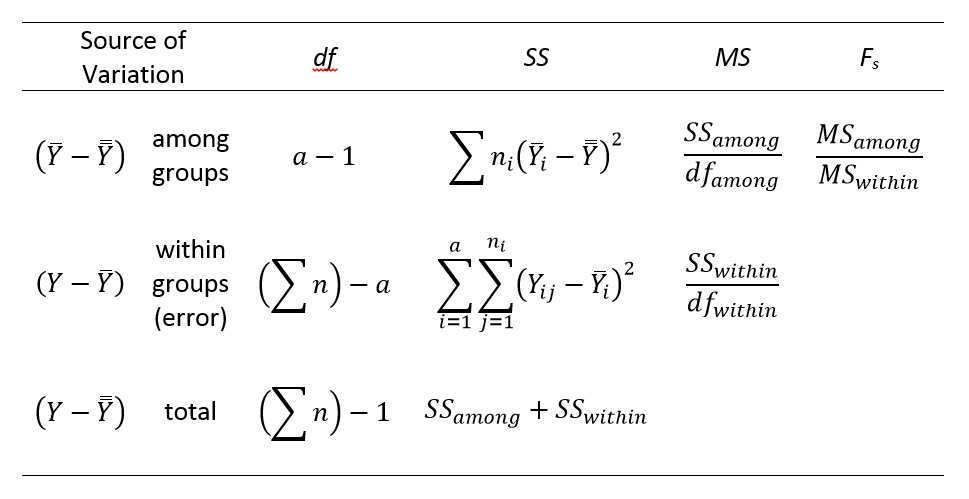

I am not going to type the confidence interval formula here; you can watch my videos or check thousands of books and websites for that. Basically, when we make a confidence interval we are sort of¹ saying, "We think the thing we are estimating is in this range of values". This range of values where we think it is can get narrower (e.g. 99 to 101 instead of 90 to 110) when we get more information about the thing we are studying.

For means, this additional information is about 1) the mean and 2) the standard deviation.

1) The "precision" of our estimate of a mean can be captured with the "standard error". As n increases, standard error drops, meaning our precision increases. You can think of a standard error as roughly meaning "a common sized difference between an estimate and the truth when studying data like this".

2) As our sample size increases, ceteris paribus, so do our degrees of freedom. The t distribution was designed to make confidence intervals wider when we have less information about the value of the standard deviation. When we have more data, we have a better estimate of the right number to use for the standard deviation, and so we don't have to make our confidence intervals "as extra wide" to account for this uncertainty.

¹ I say "sort of" because discussing the correct interpretation of confidence intervals is somewhat tedious, and you don't want to get into that right now.

Like, how would the statistic be wrong if we didn't use the proper degree of freedom? What would change?

It's relevant in inference, like hypothesis testing and confidence intervals.

For example, for chi-squared, t and F tests, change the d.f. and the shape of the distribution of the test statistic changes. If the direct link to the wikimedia image doesn't work, it's the top image here (Geek3, CC BY 3.0, via Wikimedia Commons). See how the tails of each curve differ; they're not even in the same place.
In general, "degrees of freedom" is a term that is hard to grasp, and the best way to think about it is "It (or they, in the case of the F) is just a number that tells us the shape of a distribution". If you use the wrong degrees of freedom, you are calculating the wrong area under the wrong curve to calculate things such as p values.

In the particular case of the t, it is much easier. In this case it is a measure of how much information we have to estimate the variance of the parameter of interest. The t distribution was invented by William Gosset to take into account that to make a confidence interval of something like a sample mean, you usually know neither the mean nor the standard deviation. As your sample size increases, you get better information about both of them, and the degrees of freedom captures the precision of the estimation of the standard deviation (or variance).

What if we used the wrong degrees of freedom? The "statistics" would be right, but confidence intervals and inferences would be wrong. How much would it change? It depends on how wrong we want to make the degrees of freedom. Going from df=1 to df=30 makes a huge difference in the width of a confidence interval. For example, suppose we had a sample mean of 100 and sample standard deviation of 10. If we assume degrees of freedom = 1 (n=2), the 95% confidence interval is 100 ± 89.8464. If we assume degrees of freedom = 30 (n=31), then the 95% confidence interval is 100 ± 3.668.
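The two intervals for a sample mean of 100 and a sample standard deviation of 10 can be reproduced with a few lines of Python. This is a minimal sketch, assuming the usual margin of error for a mean (critical value times s divided by the square root of n) and two-sided 95% critical values copied from a standard t table; the names `T_CRIT_95`, `t_margin` and `margins_for_example` are made up for illustration.

```python
import math

# Two-sided 95% critical values from a standard t table (assumed inputs).
T_CRIT_95 = {1: 12.7062, 30: 2.0423}

def t_margin(sample_sd, n, t_crit):
    """Half-width of a t-based confidence interval for a mean."""
    return t_crit * sample_sd / math.sqrt(n)

def margins_for_example(sample_sd=10.0):
    """Margins of error for each d.f. in the table, taking n = df + 1."""
    return {df: t_margin(sample_sd, df + 1, t) for df, t in T_CRIT_95.items()}

for df, margin in margins_for_example().items():
    print(f"df={df:>2} (n={df + 1:>2}): 100 ± {margin:.4f}")
```

Both the critical value and the standard error shrink as n grows, which is why the interval narrows so sharply between the two cases.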
